The default configuration for Windows Azure Websites and Cloud Services is to unload your application if it has not been accessed for a certain amount of time. It makes a lot of sense for Microsoft to do this, as they save resources by stopping infrequently accessed sites.

As an owner of one of these web sites, whether it’s hosted via a cloud service or as a simple Azure website, it’s a pretty annoying feature: the first user accessing your site after it has been unloaded will experience a load time of 30 seconds or more, which by today’s standards is totally unacceptable.

Luckily, there is a solution.

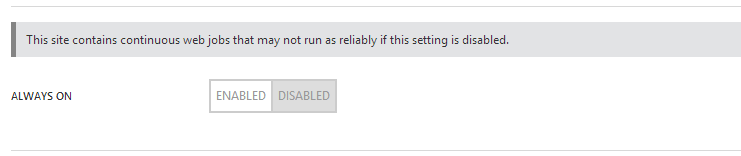

If your Azure Website is on the basic plan or better, the solution is a matter of switching on the always-on feature in the configuration of your site.

Update: The method described below, which has a continuously running job ping the site every 5 minutes to keep it warm, no longer works on a free site. Even the jobs are shut down if the site is idle for more than 20 minutes, and apparently the idle detection mechanism is smart enough to filter out requests made in the proposed way. I have posted an update to this article that lets you avoid the timeout after 20 minutes.

Unfortunately, the always-on feature is only available to sites on the basic plan or better, so if you are running a free or shared site, you have to look elsewhere for a solution. What the feature does is simply ping your site every now and then to keep the application pool up and running. This functionality is easy to mimic, so you can have an always-on site on the free plan too.

The way I chose to do it for my site http://statsofpoe.azurewebsites.net is to use the new Web Jobs feature, which is in preview right now. The Web Jobs feature lets you run a script or executable as a continuously running job inside a website. What I did was build a small executable that, in a never-ending while loop, accesses the front page of my website every five minutes. This way my application pool is never unloaded and my site is always ready to serve users.

My code looks like this:

[csharp]
using System;
using System.Configuration;
using System.Diagnostics;
using System.Net.Http;
using System.Threading.Tasks;

namespace SJKP.AzureKeepWarm
{
    class Program
    {
        static void Main(string[] args)
        {
            var runner = new Runner();
            // SiteUrl and WaitTime (in seconds) come from the appSettings section.
            var siteUrl = ConfigurationManager.AppSettings["SiteUrl"];
            var waitTime = int.Parse(ConfigurationManager.AppSettings["WaitTime"]);
            // Block the main thread; HitSite loops forever.
            Task.WaitAll(runner.HitSite(siteUrl, waitTime));
        }

        private class Runner
        {
            // A single HttpClient is reused for every request.
            private readonly HttpClient client = new HttpClient();

            public async Task HitSite(string siteUrl, int waitTime)
            {
                while (true)
                {
                    try
                    {
                        // Request the page and log the status code; dispose the response afterwards.
                        using (var response = await client.GetAsync(new Uri(siteUrl)))
                        {
                            Trace.TraceInformation("{0}: {1}", DateTime.Now, response.StatusCode);
                        }
                    }
                    catch (Exception ex)
                    {
                        // Log and carry on, so one failed request does not stop the job.
                        Trace.TraceError(ex.ToString());
                    }
                    // waitTime is in seconds; Task.Delay expects milliseconds.
                    await Task.Delay(waitTime * 1000);
                }
            }
        }
    }
}
[/csharp]

And here’s my configuration file with a wait time of 300 seconds (5 minutes):

[xml]
<?xml version="1.0" encoding="utf-8" ?>
<configuration>
  <startup>
    <supportedRuntime version="v4.0" sku=".NETFramework,Version=v4.5" />
  </startup>
  <appSettings>
    <add key="SiteUrl" value="http://statsofpoe.azurewebsites.net/"/>
    <add key="WaitTime" value="300"/>
  </appSettings>
  <system.diagnostics>
    <trace autoflush="true">
      <listeners>
        <add name="configConsoleListener"
             type="System.Diagnostics.ConsoleTraceListener" />
      </listeners>
    </trace>
  </system.diagnostics>
</configuration>
[/xml]

One thing I noticed: when you upload the zip file containing the files for your Web Job, make sure the files sit directly in the root of the zip file and not in a subfolder, as the upload will otherwise fail.
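If you would rather script the packaging than zip the files by hand, a minimal sketch along these lines should produce a flat archive. It is an illustration, not part of the original project: `WebJobPackager` and `CreateFlatZip` are hypothetical names, and on .NET Framework 4.5 the project is assumed to reference the `System.IO.Compression.FileSystem` assembly.

```csharp
using System.IO;
using System.IO.Compression;

// Hypothetical helper, not part of the original keep-warm project.
public static class WebJobPackager
{
    // Zips the contents of buildOutputDir so the files sit at the root
    // of the archive instead of inside a wrapping folder.
    public static void CreateFlatZip(string buildOutputDir, string zipPath)
    {
        // CreateFromDirectory throws if the target file already exists.
        if (File.Exists(zipPath))
        {
            File.Delete(zipPath);
        }

        ZipFile.CreateFromDirectory(
            buildOutputDir,
            zipPath,
            CompressionLevel.Optimal,
            includeBaseDirectory: false); // false = no top-level folder in the zip
    }
}
```

Calling `WebJobPackager.CreateFlatZip(outputDir, zipPath)` with your build output folder and a target path outside that folder should give you a zip that can be uploaded as-is. Keep the zip file out of the folder being zipped, otherwise the archive would try to include itself.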

Avoid automatic recycling of an Azure Cloud Services Web Role

If you have a web role, it will suffer from the same problem as Azure Websites. This is not due to Microsoft trying to save money, though, but simply due to the default configuration of an IIS application pool, which has an idle timeout of 20 minutes. So if you don’t change this and your site is not accessed for 20 minutes, IIS will automatically shut down the worker process, resulting in long load times for the first user to access the site afterwards. Another default that you might as well disable is the periodic application pool recycle, which is set to happen every 1740 minutes (29 hours).

The simplest way I have found to change this is to include a script in your package and configure it as a startup task, so that it runs every time the role is restarted.

For this to work, put the following script in a startup.cmd file that you place in a folder called Startup in your web role project.

[code]
REM *** Prevent the IIS app pools from shutting down due to being idle.
%windir%\system32\inetsrv\appcmd set config -section:applicationPools -applicationPoolDefaults.processModel.idleTimeout:00:00:00

REM *** Prevent IIS app pool recycles from recycling on the default schedule of 1740 minutes (29 hours).
%windir%\system32\inetsrv\appcmd set config -section:applicationPools -applicationPoolDefaults.recycling.periodicRestart.time:00:00:00
[/code]

Set the file’s Copy to Output Directory property to Copy always, so it becomes part of the package.

To ensure that the startup.cmd script is called, add the following lines to the ServiceDefinition.csdef file of your Azure Cloud Service project:

[xml]
<Startup>
  <Task commandLine="Startup\Startup.cmd" executionContext="elevated" />
</Startup>
[/xml]

The WebRole part of my ServiceDefinition.csdef file looks like the following:

[xml]
<WebRole name="StatsOfPoE.WebRole" vmsize="ExtraSmall">
  <Sites>
    <Site name="Web">
      <Bindings>
        <Binding name="Endpoint1" endpointName="Endpoint1" />
      </Bindings>
    </Site>
  </Sites>
  <Endpoints>
    <InputEndpoint name="Endpoint1" protocol="http" port="80" />
  </Endpoints>
  <Imports>
    <Import moduleName="Diagnostics" />
  </Imports>
  <ConfigurationSettings>
    <Setting name="Microsoft.ServiceBus.ConnectionString" />
    <Setting name="Microsoft.ServiceBus.QueueName" />
    <Setting name="Microsoft.ServiceBus.HighPriorityQueueName" />
    <Setting name="DataConnectionString" />
    <Setting name="RefreshTime"/>
  </ConfigurationSettings>
  <Startup>
    <Task commandLine="Startup\Startup.cmd" executionContext="elevated" />
  </Startup>
</WebRole>
[/xml]

When this is done and you redeploy your solution, the site will no longer automatically recycle or shut down = good stuff.